If you use AI for an EA (automated trading), it should win more, right?

No. In FX automated trading, using AI can actually increase risk.

That’s because AI can easily produce “settings that look like they work,” and it often leads to overfitting and poor reproducibility.

So should I avoid anything that says “AI-powered EA”?

Conclusion: what you should avoid is not “AI,” but an EA you can’t verify.

What matters isn’t whether it uses AI, but the verification process and reproducibility (does it behave the same on a live account?).

Is AI Automated Trading Dangerous? What You Should Know Before Believing “AI = Profits”

When you see the word “AI” along with a textbook-smooth, up-only backtest (BT), it’s easy to think, “This might be the holy grail.”

But behind results that look too perfect, you’ll often find exaggeration—and EAs that only look strong in backtests.

Even if a short forward test (a brief live run) looks good, it can still break down on a real-money account. That’s not rare.

Related article: What Is an EA? How FX Automated Trading Works and How to Choose One (Complete Guide)

Bottom line: AI doesn’t automatically make an EA profitable

AI trading (machine learning and large language models/LLMs) is appealing.

But using AI alone will not make you profitable.

In fact, if you get the testing process wrong—or if you fail to design for “same inputs, same behavior” (reproducibility)—AI-based systems can be riskier than standard EAs.

Three reasons AI trading becomes risky so easily

- Machine-learning EAs can be made to “look right” very easily

Depending on how you combine features (input data), you can create settings that fit only the past.

That makes overfitting common, leading to the classic problem: “perfect in the past, useless in the future.”

- LLM-connected EAs are hard to reproduce and validate in backtests

LLMs often rely on external APIs. That means outputs may not be identical every time (non-deterministic),

and they’re affected by latency, rate limits, and errors.

As a result, it’s difficult to recreate “the same conditions as live trading” inside a backtest.

- Even “LLM-enabled EAs” may use different logic in backtests vs live trading

This is critical: in the MT5 Strategy Tester (backtesting), external API calls are restricted (MQL5’s built-in WebRequest() function does not run in the tester), so many systems can’t reproduce LLM behavior as-is.

In that case, the backtest may run on tester-only replacement logic.

If that replacement logic is something like grid or martingale, performance can look “hard to lose” in the short term—but it can carry serious blow-up risk when the market spikes.

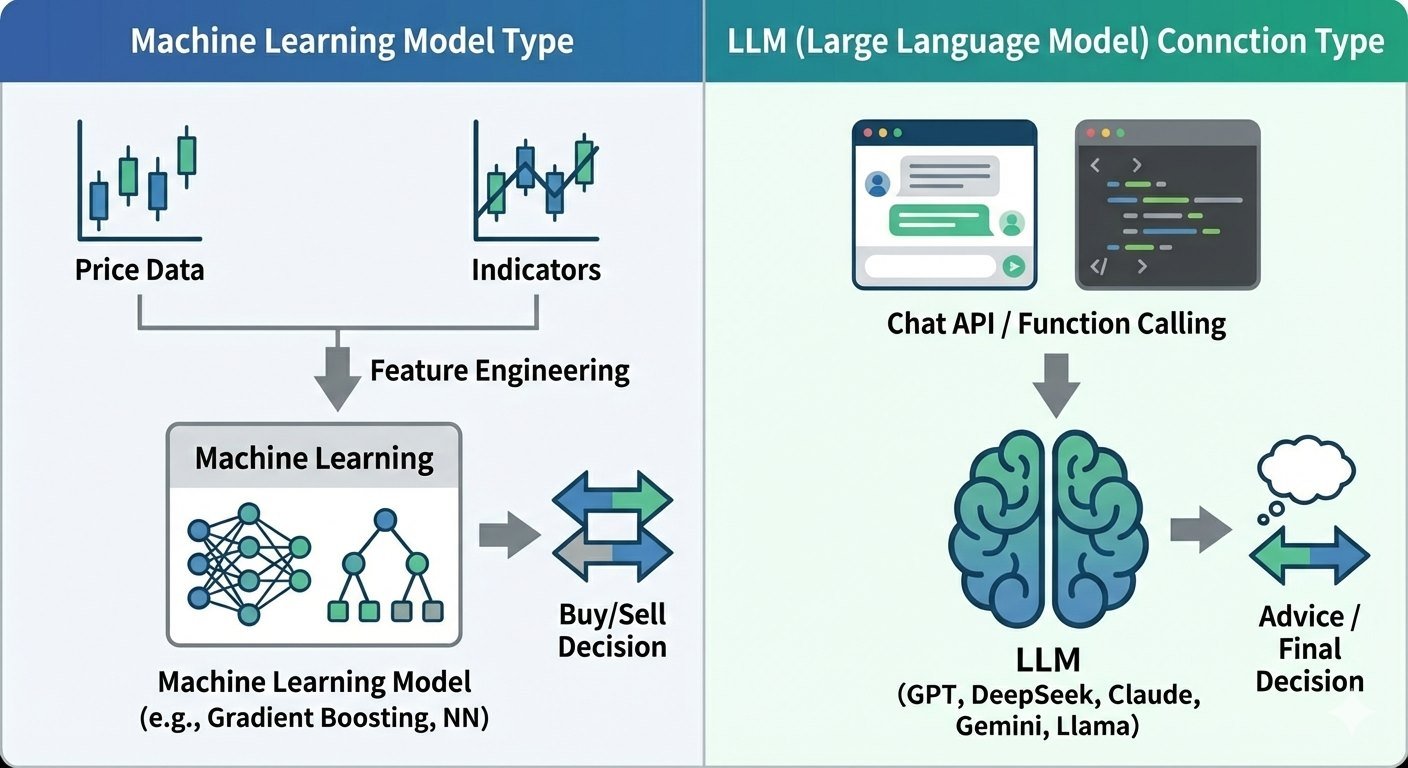

Two Types of AI Automated Trading: Machine Learning EAs vs LLM-Integrated EAs

AI-based automated trading (EAs) generally falls into two categories.

They may both “use AI,” but the mechanism and difficulty of verification are completely different.

Machine learning model type (machine learning EA)

This type builds features (input data) from price data, technical indicators, economic events, and more,

then uses a trained model (e.g., gradient boosting, neural networks) to generate trade decisions.

Watch out: machine learning models can adapt too well to past market behavior, so overfitting is common—they can fail badly when conditions change.

LLM (large language model) integration type (API-connected EA)

This type connects to LLMs like OpenAI’s GPT, DeepSeek, or Google Gemini via chat APIs,

and uses the model to summarize the market, propose signals, or assist (or even make) trade decisions.

Watch out: external APIs come with rate limits, latency, and output variance (non-determinism), so it’s hard to reproduce live-trading conditions in MT5 backtests, which makes validation difficult.

Standard EAs vs Machine Learning EAs: How They Differ (Logic, Optimization, Reproducibility)

The key difference between a “standard rule-based EA (indicator rules)” and a “machine learning EA (model-based)” isn’t really how they win.

It’s how they’re built—and what gets optimized.

Once you understand this, you’ll spot “too-perfect” backtests much more easily.

| Aspect | Standard EA (indicator rules) | Machine learning EA (features → model) |
|---|---|---|
| Core logic | Rule-based conditions from indicator signals | A statistical model learns relationships from features |
| What gets optimized | Indicator parameters and thresholds | Feature design, preprocessing, model structure, hyperparameters |
| Overfitting risk | Exists (too much parameter tuning) | Higher (more knobs to tune; easier to find “lucky” settings) |
| Validation difficulty | Easier to understand and track | Must watch for data leakage and flawed splitting methods |
| Reproducibility | Easier with fixed rules | Can vary due to training data, seeds, and implementation differences |

Note: Machine learning EAs have many more “knobs” you can tune. When everything lines up, they look extremely strong—but they also pick up lucky coincidences more easily. Next, we’ll explain why overfitting happens so often.

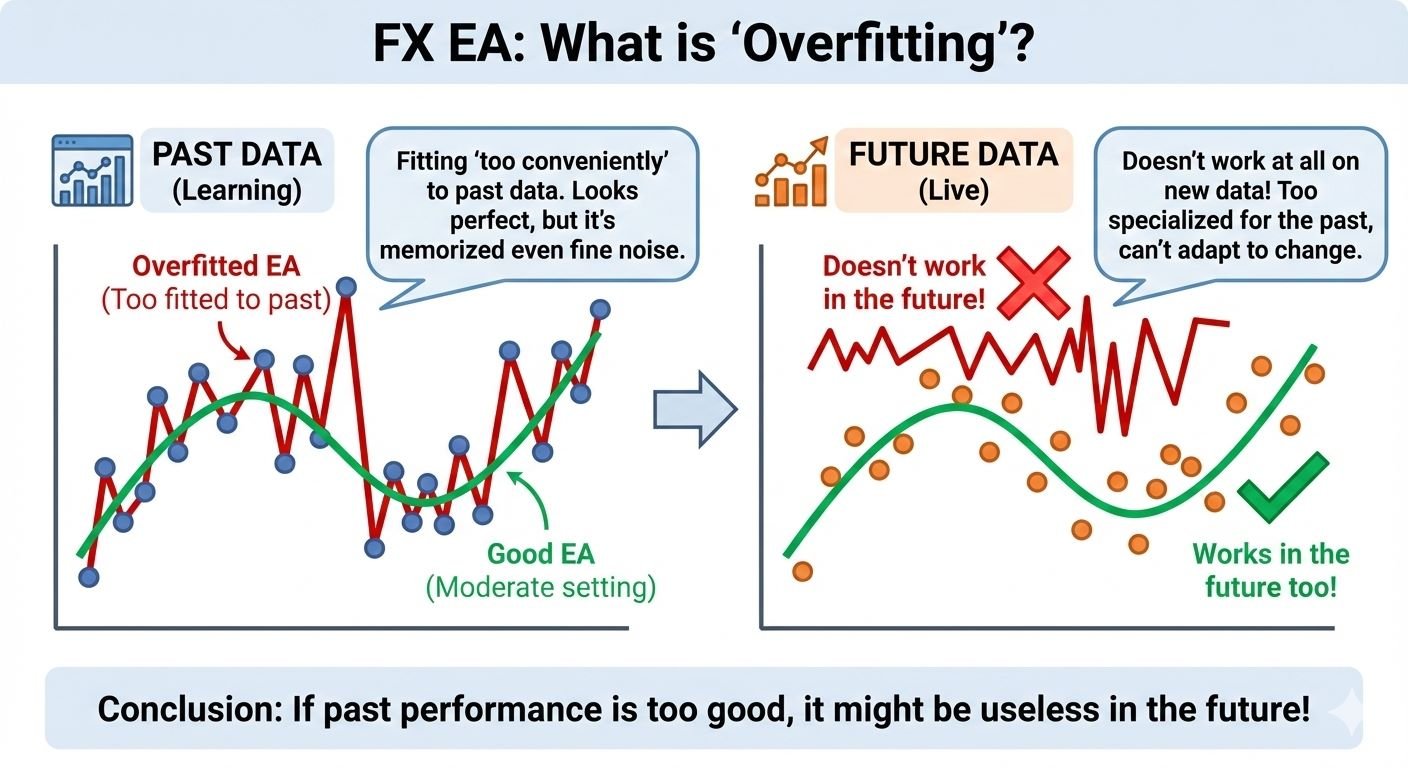

Why Machine Learning EAs Are Prone to Overfitting

Overfitting means a system matches past data too perfectly, but fails on new, unseen data.

Related: EA Overfitting (Over-Optimization): How to Detect It Before You Buy

A simple analogy: it’s like memorizing answer keys for past exams.

You can score full marks on the old questions, but change the wording slightly and your score collapses.

Markets work the same way. If the model “learns” quirks that only existed in a specific past period, it tends to fall apart in forward testing.

Overfitting is “common by nature”: machine learning models can fit too well

In machine learning, overfitting isn’t a rare bug—it’s more like a natural outcome that happens easily.

The reason is simple: a machine learning model keeps learning to improve performance, so it can end up capturing not only true patterns (repeatable signals) but also random noise.

Here, a machine learning model is basically a bundle of rules that maps inputs (features) to a trading decision.

A classic indicator EA runs on human-defined conditions like “buy when RSI is below 30.”

A machine learning model, on the other hand, searches past data and learns combinations of conditions that happened to work.

With a standard indicator EA, optimization usually means tuning a limited set of parameters—like “moving average period” or “RSI threshold.”

A machine learning model can tune far more: which features to use, how to transform them, model structure, weights, and more.

It can also handle complex combinations (e.g., multiple indicators × time-of-day × volatility × correlations).

That flexibility is powerful—but it also means the model can create rules that fit the past almost perfectly.

In other words, it may “learn” noise to boost historical results. That’s why overfitting happens so easily.

Three key reasons overfitting happens in machine learning EAs (beginner-friendly)

- The model can “fit” the past too well

Machine learning models can express more complex conditions than simple human-made rules. That’s a strength, but it also means they can create past-only rules that don’t survive the future.

- More trials = more “lucky hits”

As you test more combinations—features, preprocessing, models, parameters—you increase the chance of finding a setup that looks great by pure luck.

Sometimes you didn’t find something truly robust; you just picked the best outcome from a huge lottery.

- Bad validation can inflate results easily

Data leakage (future info sneaks in), breaking time order in evaluation, repeatedly testing on the same period—these mistakes can make a backtest look far better than reality.

Because markets are time-series data, validation design matters more than most beginners expect.
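One common way to keep time order intact is walk-forward splitting: train on an earlier window, test on the block that follows, then roll forward. The sketch below is only an illustration; the function name and the window sizes are assumptions, not part of any particular EA or library.

```python
from typing import Iterator, Tuple


def walk_forward_splits(n_bars: int, train_size: int,
                        test_size: int) -> Iterator[Tuple[range, range]]:
    """Yield (train, test) index ranges where test always follows train."""
    start = 0
    while start + train_size + test_size <= n_bars:
        train = range(start, start + train_size)
        test = range(start + train_size, start + train_size + test_size)
        yield train, test
        start += test_size  # roll the whole window forward by one test block


# Hypothetical sizes: 1,000 bars, train on 600, test on the next 100.
splits = list(walk_forward_splits(n_bars=1000, train_size=600, test_size=100))
for train, test in splits:
    # The test block never overlaps or precedes its training window.
    assert train[-1] < test[0]
    print(f"train {train.start}-{train.stop - 1} -> "
          f"test {test.start}-{test.stop - 1}")
```

Because every test block lies strictly after its training window, future bars cannot leak into training through the split itself. Leakage inside the features (for example, an indicator computed with future bars) still has to be checked separately.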

Extra note: Markets change as interest rates, volatility, and participants shift (non-stationary behavior). That’s why “worked before” does not guarantee “works later.”
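The “more trials = more lucky hits” effect is easy to simulate. The sketch below is a toy model under a strong assumption: every “strategy” is a coin flip with zero true edge (each trade wins or loses one unit). It picks the strategy with the best past result and then looks at how that same strategy did on fresh data.

```python
import random


def best_of_random_strategies(n_strategies: int, n_trades: int,
                              seed: int = 0) -> tuple:
    """Simulate zero-edge strategies.

    Returns (best in-sample P&L, out-of-sample P&L of that same strategy).
    """
    rng = random.Random(seed)
    best_in, best_out = float("-inf"), 0.0
    for _ in range(n_strategies):
        in_sample = sum(rng.choice((-1.0, 1.0)) for _ in range(n_trades))
        out_sample = sum(rng.choice((-1.0, 1.0)) for _ in range(n_trades))
        if in_sample > best_in:
            best_in, best_out = in_sample, out_sample
    return best_in, best_out


best_in, best_out = best_of_random_strategies(n_strategies=1000, n_trades=100)
print(f"best in-sample P&L: {best_in:+.0f}, same strategy out-of-sample: {best_out:+.0f}")
```

With enough candidates, the best in-sample result tends to look impressive even though no strategy has any edge, while the out-of-sample number is just another coin-flip outcome. That gap is what a heavily searched backtest can hide.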

Common signs of overfitting

- The backtest looks unrealistically perfect (too smooth; drawdown too small)

- Small setting changes cause huge performance swings (not robust)

- It breaks when you change the period or symbol (weak reproducibility)

- Numbers look great but no one can explain why (likely a lucky fit)

LLM-Integrated EAs (GPT, etc.): 5 Reasons MT5 Backtests Struggle to Validate Them

In recent years, more EAs claim to connect to LLM APIs like OpenAI’s GPT, DeepSeek, Anthropic Claude, Google Gemini, and Meta’s Llama family.

They use chat APIs or function calling (tool use) to let an LLM suggest signals or handle part of the decision-making.

But here’s the foundation: an LLM is a language model (a system that generates text).

It does not calculate, let alone guarantee, whether a strategy has positive expectancy.

So if an LLM suggests trades, you still must validate them separately with long-term backtests, forward tests, and realistic trading costs.

And in practice, many LLM setups rely on external APIs. In MT5 Strategy Tester, it’s hard to reproduce live conditions—external communication behavior, latency, and rate limits—so backtesting often can’t validate the real edge.

Basic workflow of an LLM-integrated EA (overview)

MT5 / EA

↓ (Get price data)

Preprocessing (indicator calculations / regime checks)

↓ (API request)

LLM (returns text/JSON: suggestions, reasons, conditions)

↓ (Parse)

EA-side mechanical checks (rules / risk / consistency)

↓ (Final decision)

Order execution (lot size, SL/TP, max slippage, etc.)

↓

Monitoring (fills, error handling, logging, fallback)

Key point: For safety and reproducibility, don’t send LLM output straight into order execution.

A safer design is: “LLM suggests” → “EA applies fixed rules and risk limits before executing.”
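As a rough illustration of “LLM suggests, EA decides,” the sketch below passes a suggestion through fixed checks before anything reaches order execution. The `Suggestion` type, the limits, and the function name are all hypothetical; a real EA would implement the same idea in its own language with its own limits.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Suggestion:
    side: str              # expected: "buy" or "sell"
    lots: float
    stop_loss_pips: float


# Hypothetical fixed limits; real values depend on the account and strategy.
MAX_LOTS = 0.10
MAX_STOP_LOSS_PIPS = 50.0


def apply_fixed_rules(s: Suggestion, open_positions: int,
                      max_positions: int = 1) -> Optional[Suggestion]:
    """Return an order the EA may send, or None to skip the trade."""
    if s.side not in ("buy", "sell"):
        return None
    if open_positions >= max_positions:
        return None  # no position stacking
    if not (0 < s.stop_loss_pips <= MAX_STOP_LOSS_PIPS):
        return None  # a stop loss is mandatory and bounded
    if s.lots <= 0:
        return None
    # Cap the lot size instead of trusting the suggested value.
    return Suggestion(s.side, min(s.lots, MAX_LOTS), s.stop_loss_pips)


order = apply_fixed_rules(Suggestion("buy", 5.0, 20.0), open_positions=0)
print(order)  # the oversized lot request is capped at MAX_LOTS
```

The point of the design is that the worst case is bounded by rules you can read and backtest, no matter what text the model returns.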

Note 1: LLMs are not designed to prove statistical edge

As explained above, an LLM isn’t a tool that automatically produces reliable evidence through proper statistical tests or expectancy calculations.

For example, answering “What happens to P&L and drawdown if this rule trades 1,000 times?” in a correct, reproducible way is the domain of backtesting and statistics—not text generation.

LLMs can sound convincing, but a good explanation does not equal positive expectancy.

Treat LLM output as an idea, not proof.

Note 2: Reproducibility issues (outputs can change under the same inputs)

Small prompt changes, settings (like temperature), and model updates can change the answer.

If the decision changes over time, results change too—making it harder to run a clean, repeatable verification under identical conditions.
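One way to make verification repeatable is to record every model answer together with the exact request that produced it, and replay the recording when re-running a test. The sketch below assumes a hypothetical `call_model` function standing in for a real API client; here a fake model that answers differently on every call takes its place.

```python
import hashlib
import json
from typing import Callable, Dict


def request_key(model: str, prompt: str, temperature: float) -> str:
    """Stable hash of everything that influences the answer."""
    payload = json.dumps(
        {"model": model, "prompt": prompt, "temperature": temperature},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class RecordedLLM:
    """Calls the model once per unique request, then replays the stored answer."""

    def __init__(self, call_model: Callable[[str, str, float], str]):
        self._call_model = call_model  # hypothetical function that hits the API
        self.log: Dict[str, str] = {}

    def ask(self, model: str, prompt: str, temperature: float = 0.0) -> str:
        key = request_key(model, prompt, temperature)
        if key not in self.log:
            self.log[key] = self._call_model(model, prompt, temperature)
        return self.log[key]


calls = []


def fake_model(model: str, prompt: str, temperature: float) -> str:
    calls.append(prompt)
    return f"answer #{len(calls)}"  # deliberately different on every real call


llm = RecordedLLM(fake_model)
first = llm.ask("some-model", "EURUSD H1 summary")
second = llm.ask("some-model", "EURUSD H1 summary")
print(first == second, len(calls))
```

Replay does not make the model itself deterministic. It only guarantees that a given test run can be repeated on the same recorded decisions, which is the minimum needed for a clean comparison.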

Note 3: In live trading, latency, errors, and price mismatch can be fatal

If you insert an external API, latency and connection errors will happen.

In an EA, even a few seconds can change outcomes—especially for scalping systems.

- API latency: while waiting seconds, price moves and the entry conditions can break

- Timeouts/outages: decisions go missing, making execution unstable

- Backtest gap: the tester “jumps through time,” so it’s hard to reproduce the same latency impact
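A simple guard against latency is to discard any answer that arrived too late or after price has drifted too far from where it was when the request was sent. The thresholds and names below are assumptions chosen for illustration.

```python
def still_valid(requested_price: float, current_price: float,
                elapsed_seconds: float, max_wait_seconds: float = 2.0,
                max_drift_pips: float = 1.0, pip_size: float = 0.0001) -> bool:
    """Return True only if the answer arrived quickly and price barely moved."""
    if elapsed_seconds > max_wait_seconds:
        return False
    drift_pips = abs(current_price - requested_price) / pip_size
    return drift_pips <= max_drift_pips


# Answer came back in 0.5 s, but price moved 3 pips in the meantime: skip.
print(still_valid(1.1000, 1.1003, elapsed_seconds=0.5))
# Price is unchanged, but the answer took 5 s: skip as well.
print(still_valid(1.1000, 1.1000, elapsed_seconds=5.0))
```

A guard like this keeps stale decisions out of live execution, but it also means the live system skips trades that a zero-latency backtest would have taken, which is one more reason the two can diverge.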

Note 4: Output safety (hallucinations / format breaks / runaway behavior)

LLMs sometimes make up answers when they don’t know (hallucinations).

Even with JSON output, you can still get missing fields, wrong types, or unexpected values.

In trading, that can directly cause losses.
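A defensive parser treats anything unexpected as “no trade.” The sketch below assumes a hypothetical response format with two fields, `action` and `confidence`; the field names and allowed values are illustrative only.

```python
import json
from typing import Optional

ALLOWED_ACTIONS = {"buy", "sell", "hold"}


def parse_signal(raw: str) -> Optional[dict]:
    """Return a validated signal dict, or None if anything looks wrong."""
    try:
        data = json.loads(raw)
    except (ValueError, TypeError):
        return None  # not JSON at all
    if not isinstance(data, dict):
        return None
    action = data.get("action")
    confidence = data.get("confidence")
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        return None  # missing field, wrong type, or unexpected value
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(confidence, bool) or not isinstance(confidence, (int, float)):
        return None
    if not 0.0 <= confidence <= 1.0:
        return None
    return {"action": action, "confidence": float(confidence)}


print(parse_signal('{"action": "buy", "confidence": 0.7}'))
print(parse_signal('{"action": "BUY NOW!!", "confidence": 0.9}'))  # None
print(parse_signal('{"action": "sell", "confidence": "high"}'))    # None
```

Returning None on every failure path means a malformed answer results in a skipped trade rather than an order built from garbage.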

Note 5: Operating cost and changing rules (pricing, rate limits, sudden behavior changes)

LLM APIs can change pricing, rate limits, or specs during operation.

That can lead to “suddenly more expensive,” “suddenly slower,” or “hits a limit and stops.”

API key handling also matters. Hard-coding keys inside an EA creates a serious leakage risk.

In the worst case, someone can abuse your key and cause billing damage or service suspension.
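If part of the system runs as a separate helper process (an assumption; many setups differ), the key can live in the environment instead of in source code. The variable name `LLM_API_KEY` below is hypothetical.

```python
import os


def load_api_key(var_name: str = "LLM_API_KEY") -> str:
    """Read the API key from an environment variable instead of source code."""
    key = os.environ.get(var_name, "").strip()
    if not key:
        # Fail loudly at startup rather than sending unauthenticated requests.
        raise RuntimeError(f"Environment variable {var_name} is not set")
    return key
```

The same principle applies whatever the language: the key should be supplied at run time by the user, never shipped inside a distributed file.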

In short, LLM-integrated EAs can be great for idea generation and decision support—but they carry major pitfalls around edge validation, reproducibility, and operational stability.

That’s why you should always remember: LLMs are powerful tools, not magic profit engines.

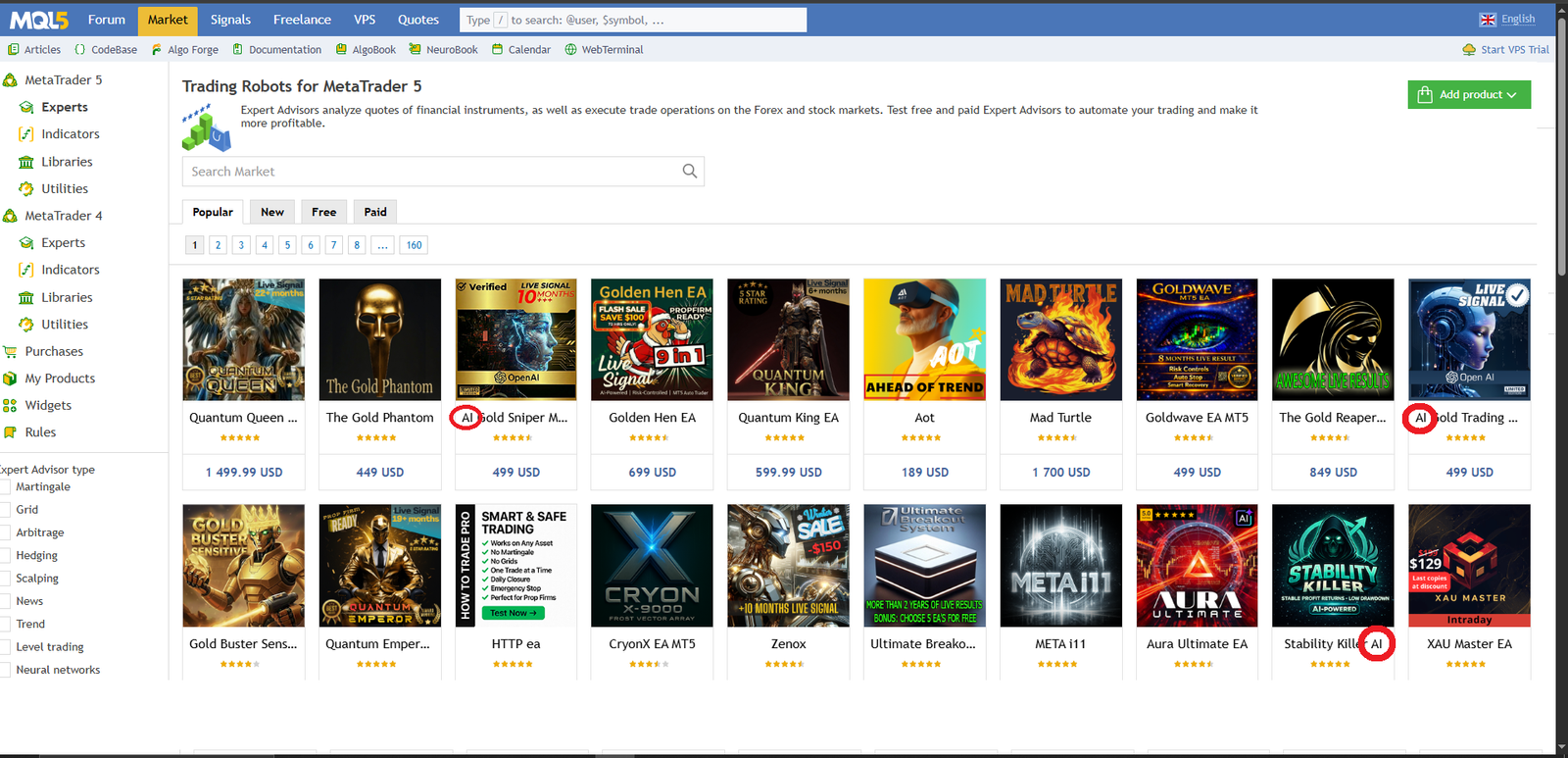

Be Careful With “AI/LLM-Connected EAs” in the MQL5 Market: Why Backtests May Not Be Trustworthy

Recently, more products in the MQL5 Market claim “GPT,” “DeepSeek,” or other LLM API connections.

But the key point is this: “AI/LLM-connected” is not evidence of profitability—transparency in verification is everything.

Because external API integration is hard to reproduce under identical live conditions in MT5 Strategy Tester,

there’s also a real possibility that the backtest was generated using tester-only replacement logic.

Why “LLM integration can’t be verified in MT5 backtests” is so common

- External HTTP/HTTPS communication is restricted or unstable

The Strategy Tester processes historical data at high speed, but external APIs assume real-time communication.

Speed, limits, and error behavior won’t match live trading.

- It’s difficult to reproduce the impact of latency (delay)

In live trading, prices can move during a few seconds—or even 10+ seconds—of API response time.

But the tester “jumps through time,” so the same latency impact is often not reflected.

- Real-world constraints don’t appear: rate limits, pricing, outages

Live trading can face “slow due to congestion,” “temporary downtime,” or “hit request limits.”

Backtest results often ignore these operational realities.

Because of this, if a developer designs the EA so that “the tester can’t (or shouldn’t) use external APIs,”

they often end up separating backtest logic and live logic.

If that separation isn’t clearly explained, buyers can easily assume they’re seeing results from the same strategy—when they may not be.

Warning: It’s easy to make an EA look strong by using a different strategy in backtests

Even if the product claims “connected to AI,” if the backtest runs a grid / martingale-type strategy,

it’s relatively easy to produce results that look hard to lose in the short term—making it seem like AI is “predicting the market perfectly.”

If you see an equity curve that is too smooth with an unrealistically small drawdown, be cautious.

It may not be “AI power,” but rather averaging down, lot-multiplying, and position stacking shaping the curve.

Especially dangerous: Don’t get fooled by how “good” grid and martingale can look

Grid and martingale strategies can be made to look stable short-term,

but they can also build large floating losses through accumulated positions—and become fatal during a sudden market move.

When volatility spikes and spreads widen at the same time, the worst-case outcome can be account wipeout.
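The arithmetic behind that risk is simple. The sketch below assumes a plain doubling martingale (the multiplier and starting lot are hypothetical) and shows how quickly total exposure grows during a losing streak.

```python
def martingale_exposure(base_lot: float, losing_streak: int,
                        multiplier: float = 2.0) -> tuple:
    """Return (size of the latest position, total lots opened so far)."""
    lots = [base_lot * multiplier ** i for i in range(losing_streak + 1)]
    return lots[-1], sum(lots)


for streak in (3, 5, 7):
    latest, total = martingale_exposure(base_lot=0.01, losing_streak=streak)
    print(f"after {streak} losing steps: latest {latest:.2f} lots, total {total:.2f} lots")
```

Starting from 0.01 lots, seven losing steps put 1.28 lots on the latest position and about 2.55 lots in total, roughly 255 times the initial size. The equity curve stays smooth only as long as the market reverses before the account runs out of margin.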

Related articles:

Martingale EAs: Why They Blow Up (Backtest Proof + Checklist)

Why Grid Forex EAs Blow Up: Hidden Drawdowns + Red Flags (Self-Made EA Test)

Key points you should understand first

- “AI/LLM” does not mean “profitable”

LLMs can generate text well, but they don’t guarantee positive expectancy. Profitability still requires real testing and proof.

- A backtest is only meaningful if you know what logic actually ran

If LLM integration can’t be reproduced in the tester, the backtest may be running different logic. If that’s unclear, it’s a major red flag.

- Who has final control over trade decisions?

Whether “the LLM decides” or “the EA’s fixed rules decide” dramatically changes reproducibility and safety.

How to Identify a Good EA (These Rules Apply Whether It Uses AI or Not)

Here are core criteria for evaluating an EA, regardless of whether it uses AI.

A common beginner mistake is getting pulled in by flashy numbers—win rate, a smooth up-only curve, or short-term explosive returns.

But EAs that survive long-term are usually the opposite: not flashy, but robust and hard to break.

These checks are not “AI/LLM is safe” or “AI/LLM is dangerous.”

The point is simpler: verification quality and system design are what matter.

1. Does it hold up in long-term backtests (can it handle regime changes?)

Markets shift between trends, ranges, high volatility, and low volatility.

A good EA tends to avoid “only works in one lucky period,” and is less likely to take a fatal hit when conditions change.

- Even if the equity curve rises, is there any blow-up-level drawdown (DD)?

- Is performance dependent on a single “golden period” (over-reliance on a specific window)?

- During volatility spikes or regime shifts, does loss size stay controlled?

- If you test other symbols, is the result at least “not terrible”?
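To check drawdown yourself rather than relying on a headline number, you can compute the maximum peak-to-trough decline from an equity series. The sketch below assumes a non-empty list of positive equity values; the sample numbers are made up.

```python
def max_drawdown(equity: list) -> float:
    """Largest peak-to-trough decline, as a fraction of the peak."""
    peak = equity[0]
    worst = 0.0
    for value in equity:
        peak = max(peak, value)                    # highest equity seen so far
        worst = max(worst, (peak - value) / peak)  # decline from that peak
    return worst


# Hypothetical equity curve: it ends higher, yet it fell 25% along the way.
curve = [100.0, 120.0, 90.0, 130.0, 117.0]
print(f"max drawdown: {max_drawdown(curve):.0%}")
```

Running this on equity (which includes floating losses) rather than on closed-trade balance matters most for grid and martingale systems, where the balance curve can look calm while equity is deep underwater.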

Related: How to Read MT5 Backtests: Verify EA Risk with Equity DD & Orders/Deals

2. Is there a real-account forward test (real money + continuous reporting)?

A backtest is a simulation. It cannot fully reproduce slippage, rejected fills, spread widening, or latency.

That’s why an ongoing forward test on a real account (ideally tracked by a third party) matters.

- Is it a real-money account, not a demo? (Real is better.)

- Is it updated consistently, not only shown for a short period?

- Is it published in a way that’s hard to manipulate (e.g., via Myfxbook)?

Related: How to Read Myfxbook: Spot Risky EAs (Balance vs Equity, Margin Spikes, Trade History)

3. Is the risk/reward profile healthy? (More important than win rate)

What matters is not win rate alone, but expectancy: win rate × average win, minus loss rate × average loss.

A high win rate can still be dangerous if losses are large (small wins, occasional huge loss).

- Are average losses unusually large? (Small wins + rare big losses is a danger sign.)

- Even with a good PF, are DD and the profit/loss distribution distorted?

- Does it look like the system “cuts losses too late”?

- Does it use tactics like averaging down (grid) or martingale that can cause one-hit catastrophic losses?
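A quick expectancy calculation makes the “high win rate can still lose” point concrete. The figures below are hypothetical, and `avg_loss` is entered as a positive number.

```python
def expectancy(win_rate: float, avg_win: float, avg_loss: float) -> float:
    """Average P&L per trade; avg_loss is given as a positive number."""
    return win_rate * avg_win - (1.0 - win_rate) * avg_loss


# 90% win rate, small wins, rare large losses: negative per-trade expectancy.
print(f"{expectancy(0.90, avg_win=10.0, avg_loss=120.0):+.1f}")
# 40% win rate, wins twice the size of losses: positive per-trade expectancy.
print(f"{expectancy(0.40, avg_win=30.0, avg_loss=15.0):+.1f}")
```

The first system wins nine times out of ten and still loses about 3 units per trade on average; the second loses more often than it wins and still earns about 3 units per trade.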

4. Is it reproducible? (Scalping EAs need extra caution)

Scalping EAs that target “just a few pips” are highly sensitive to trading conditions.

Spread, commissions, and slippage can destroy performance, and broker execution method and latency also matter.

It’s common for backtest or demo results to fail to reproduce on real accounts, so be cautious.
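A back-of-the-envelope calculation shows why small targets are so fragile. All numbers below are hypothetical, with commission expressed in pip terms for simplicity.

```python
def net_pips(gross_target_pips: float, spread_pips: float,
             commission_pips: float, slippage_pips: float) -> float:
    """Pips left from a winning trade after trading costs."""
    return gross_target_pips - spread_pips - commission_pips - slippage_pips


# Same 3-pip target under optimistic backtest costs vs. rougher live costs.
backtest = net_pips(3.0, spread_pips=0.3, commission_pips=0.0, slippage_pips=0.0)
live = net_pips(3.0, spread_pips=1.0, commission_pips=0.6, slippage_pips=0.5)
print(f"backtest: {backtest:.1f} pips per win, live: {live:.1f} pips per win")
```

In this example the same winning trade keeps about 2.7 pips in the backtest but only about 0.9 pips live, so roughly two thirds of the apparent edge comes from cost assumptions alone.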

Related article: Scalping EAs: Why They Often Fail on Live Accounts (Costs, Slippage, Execution)

Summary

AI is a powerful tool, but it’s not a master key.

Machine learning EAs are prone to overfitting, and LLM-connected EAs are difficult to validate and make reproducible in real operations.

That’s why the right evaluation axis isn’t “AI or not,” but the fundamentals:

long-term backtests, live forward tests, healthy risk/reward, and reproducibility.

Don’t be swayed by flashy marketing.

Prioritize transparent verification and design that doesn’t break easily.

That’s the shortest path to identifying an EA that can survive over the long run—AI or not.

Related: EA Robustness Explained: How to Choose a Forex Trading Robot That Won’t Blow Up

FAQ

- Q. If I use AI, will I definitely become profitable?

- A. No. If the design and verification are weak, AI can amplify risk. Make sure you understand the source of expectancy and confirm reproducibility.

- Q. Can I backtest an LLM-connected EA?

- A. In standard environments, it’s often difficult to reproduce external API behavior exactly, so direct validation can be hard. Clear disclosure of any replacement logic and a transparent verification process are essential.

- Q. What is the single most important thing when choosing an EA?

- A. Not whether it uses AI, but consistent proof across three basics: long-term backtests, live forward tests, and a healthy risk/reward profile (RR). Robustness should be your top priority.