I found an EA with a 90%+ win rate in the backtest. The equity curve looks great and the drawdown is tiny.

I should buy it right now, right?

Bottom line: it’s safer not to buy yet.

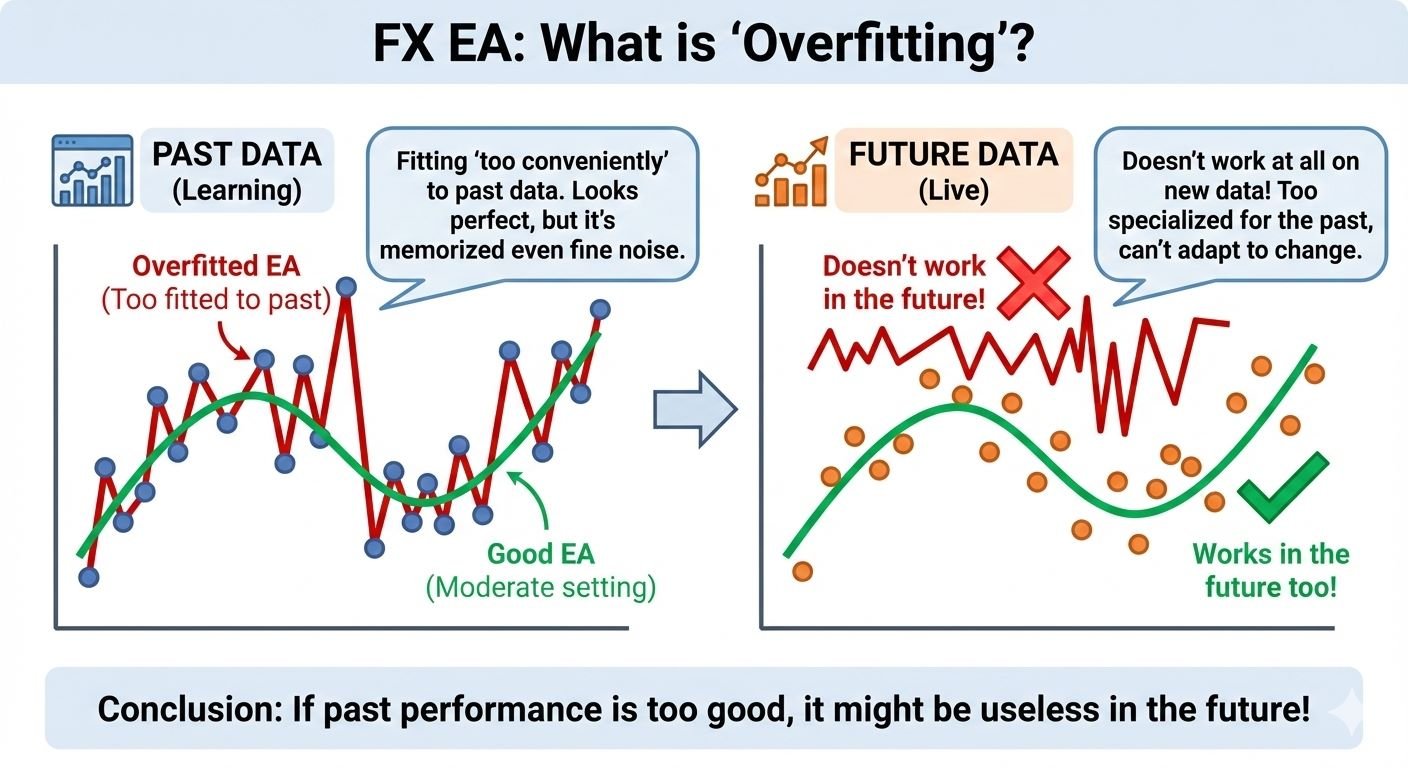

The more “perfect” an EA looks in a backtest, the more you should question it. One major reason is overfitting.

Overfitting lets an EA look “clean” by fitting past data too closely—so it often fails to hold up in future market conditions.

In this article, I’ll share practical ways to spot overfitting.

What You’ll Learn: How to Spot Overfitting in EAs

- What an overfit EA really is: why it can look “unusually strong” in backtests only

- How to detect overfitting: forward testing / trade count & test period / testing other pairs / the “AI” trap

- Pre-purchase checklist: the minimum checks beginners should do

- How to think about 5 common “validation tests”: out-of-sample / walk-forward / cost stress testing

For a general explanation of automated trading (EAs), see What Is a Forex EA (MT4/MT5)? An Automated Trading Guide.

What Overfitting Means: Why an EA Can Win Only in Backtests

Overfitting means an EA fits past market data **too closely**, then fails to repeat the same performance in future markets.

In simple terms, it’s like an EA that **memorized the answers to a test**. It can look perfect on historical charts (backtests), but struggle in new, unseen conditions (forward/live trading).

Why Overfit EAs Stop Working (The Main Reason)

Markets don’t repeat the same patterns forever. Things change—volatility, the balance of trending vs. ranging markets, news reactions, and liquidity.

Overfit EAs often pick up tiny quirks that happened “by chance” in a specific period (noise).

So when conditions shift even a little, the logic stops matching the market—and the “great backtest” can fall apart fast.

Why Overfitting Happens So Easily (The MT5 Optimization Trap)

When you build an EA, the historical price data (the “answers”) is already known.

That makes it surprisingly easy to tune parameters and create settings that win only on the past.

The more items you can tweak, the easier it becomes to find settings that match history perfectly, for example:

- Indicator periods (e.g., MA or RSI length)

- Entry/exit thresholds

- Filter rules (time window, day-of-week, spread limits, etc.)

- Stop loss / take profit / trailing rules

MT5 includes strong built-in optimization tools, so adding more conditions often produces a “perfect-looking” backtest.

But markets don’t move exactly like they did before—so the more an EA fits old noise, the more likely it is to weaken in real trading.
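To see how easily optimization can “find” an edge that isn’t there, here is a minimal sketch in Python. It is not MT5 code, and everything in it is an assumption for illustration: each hypothetical “setting” is just a list of random trade results with zero real edge, where the first half plays the role of the backtest and the second half plays the role of unseen data. The names `profit_factor` and `best_of_n` are made up for this example.

```python
import random

def profit_factor(pnls):
    """Gross profit divided by gross loss (inf if there are no losses)."""
    gains = sum(p for p in pnls if p > 0)
    losses = -sum(p for p in pnls if p < 0)
    return gains / losses if losses > 0 else float("inf")

def best_of_n(n_settings, n_trades=100, seed=0):
    """Pick the best-looking backtest among n_settings zero-edge 'settings'.

    Returns (backtest PF of the winner, PF of the same winner on unseen data).
    """
    rng = random.Random(seed)
    best = None
    for _ in range(n_settings):
        # Pure noise: every trade is a random draw with an average of zero.
        pnls = [rng.gauss(0.0, 1.0) for _ in range(2 * n_trades)]
        in_sample, unseen = pnls[:n_trades], pnls[n_trades:]
        score = profit_factor(in_sample)
        if best is None or score > best[0]:
            best = (score, profit_factor(unseen))
    return best

for n in (1, 10, 1000):
    pf_backtest, pf_unseen = best_of_n(n)
    print(f"{n:>5} settings tried: best backtest PF={pf_backtest:.2f}, "
          f"same setting on unseen data PF={pf_unseen:.2f}")
```

Because none of these settings has any real edge, any backtest PF above 1 here is luck. The best backtest PF can only go up as more settings are tried, which is the same mechanism that makes a heavily optimized EA look “perfect” on history.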

Common Signs of an Overfit EA (Be Careful If the Backtest Looks Too Perfect)

- In the backtest: an unrealistically smooth upward curve, very high win rate or high profit factor (PF), and very low drawdown (DD)

- In forward/live trading (or a different period): PF drops suddenly and DD jumps

- High win rate, but occasional huge losses that wipe out a lot of the gains (many small wins, then one big hit)

Forward Testing Is Non-Negotiable: Use Real Results to Catch Overfitting

Forward testing means running the EA on a real account (or an environment close to live trading) and judging it by results on market data it has never seen.

If you want to avoid overfitting, forward results are the #1 thing to check.

Why? Because an overfit EA can look amazing in a backtest, then fail to repeat it in future market conditions.

Why Forward Testing Matters (Why Backtests Alone Aren’t Enough)

- Backtest = checking answers on past data (you can “build to the past”)

- Forward = a test closer to the future (the EA must handle changing conditions)

- So forward testing is the most realistic cross-check for spotting overfitting

Where to Check Forward Results (Myfxbook / MQL5 Signals)

Some EA developers publish results on third-party services.

If you’re buying an EA, first check whether forward results are public on services like Myfxbook or MQL5 Signals.

- If results are not published at all, that’s a red flag

- Also check the broker, account type, and trading cost assumptions

Note: Demo Results Are “Reference Only”

Demo accounts can behave differently from live accounts—fills, slippage, spread changes, and rejections.

So it’s not rare to see a system that looks good on demo but performs worse on live.

- Prioritize live-account forward results

- If possible, look for results from a major broker

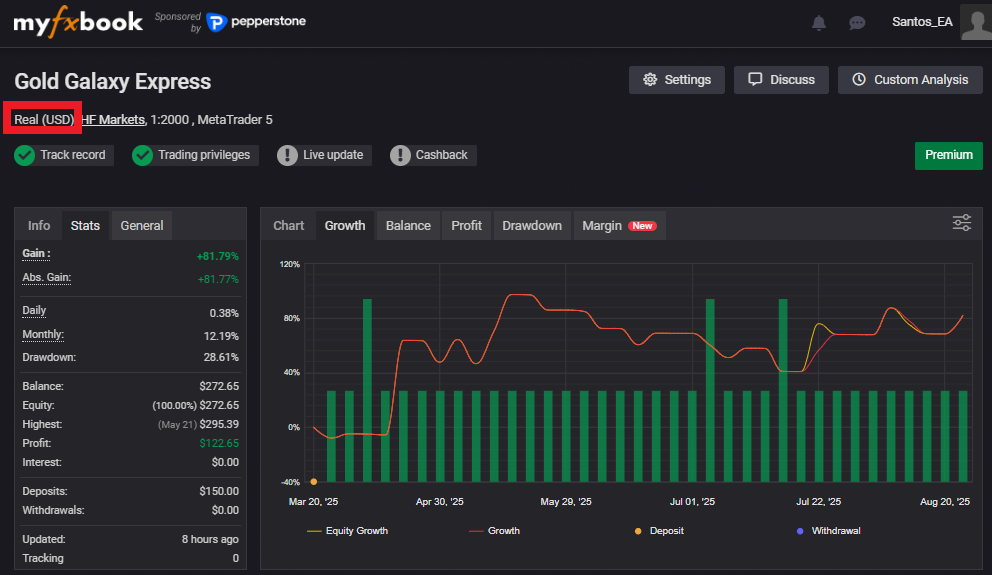

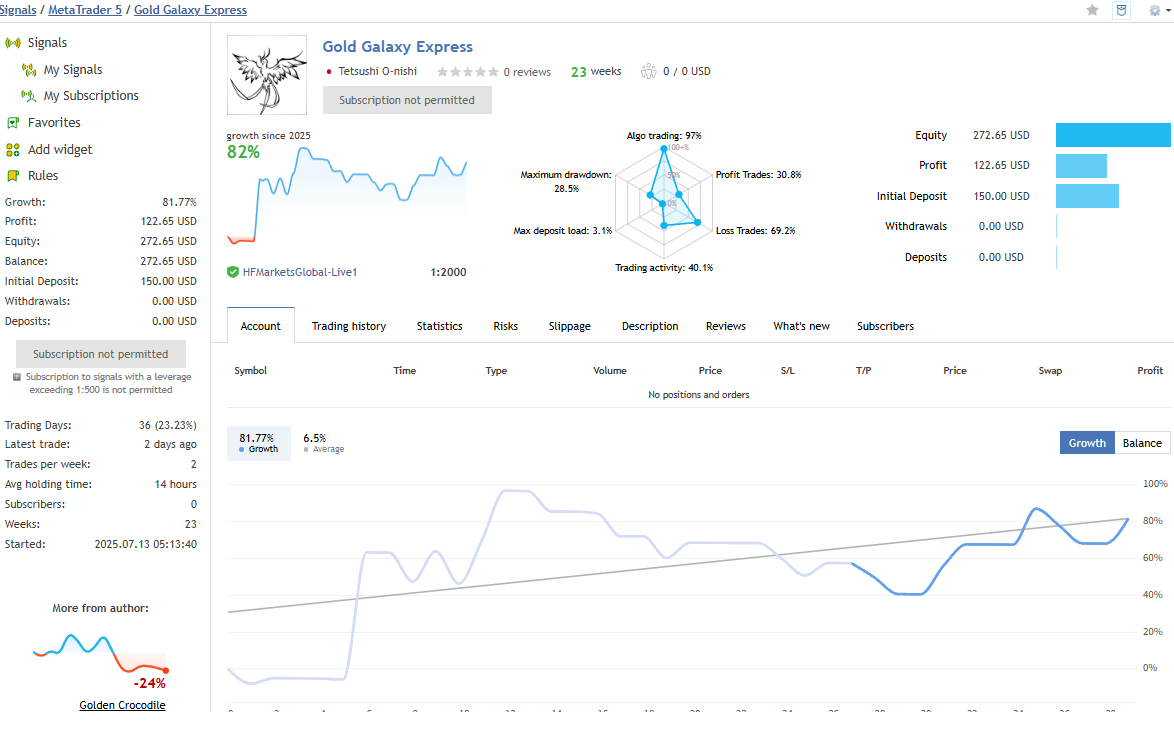

Example: Forward Result Screens (Myfxbook / MQL5 Signals)

As a reference, here are forward result screens from my own EA (Gold Galaxy Express EA) as of Aug 24, 2025.

Important: “Has Forward Results” Doesn’t Automatically Mean “Safe”

Forward results matter—but good forward performance does not guarantee the EA isn’t overfit.

Beginners often get trapped by patterns like these:

Trap #1: No Stop Loss (or an Extremely Wide One)

If an EA avoids stop losses—or sets them extremely wide—it can look “hard to lose” in the short term.

But over time, one big loss can wipe out many small gains (the classic “many small wins, one big hit” profile).

Trap #2: Averaging Down (Grid) or Martingale

Grid (averaging down) and martingale systems often produce a very smooth balance curve for a while.

But in a prolonged adverse move, they can trigger a massive loss—and in the worst case, blow the account.

What to Check in Forward Results (The Must-See Items for Beginners)

- Trade history: how losses appear (are there hidden “one-shot” big losses?)

- RR (average win ÷ average loss): a high win rate can still be dangerous if RR is tiny

- Max DD: don’t judge by short periods—watch how DD grows (any sudden spike?)

- Equity vs. Balance: a big gap can mean the strategy often holds large floating losses
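If you can export the trade history, most of these items can be computed in a few lines. The sketch below assumes you already have a plain list of closed-trade profits and losses in account currency. The function name `summarize_trades` and the sample history are hypothetical, and the drawdown is measured on the closed-trade balance only, so it cannot show floating losses.

```python
def summarize_trades(pnls):
    """Summarize closed-trade results (one profit/loss number per trade)."""
    if not pnls:
        raise ValueError("need at least one trade")
    wins = [p for p in pnls if p > 0]
    losses = [-p for p in pnls if p < 0]
    avg_win = sum(wins) / len(wins) if wins else 0.0
    avg_loss = sum(losses) / len(losses) if losses else 0.0

    # Max drawdown of the balance curve (closed trades only).
    balance = peak = max_dd = 0.0
    for p in pnls:
        balance += p
        peak = max(peak, balance)
        max_dd = max(max_dd, peak - balance)

    return {
        "win_rate": len(wins) / len(pnls),
        # RR = average win / average loss, as defined in the list above.
        "rr": avg_win / avg_loss if avg_loss else float("inf"),
        "largest_loss_vs_avg_win": max(losses) / avg_win if wins and losses else 0.0,
        "max_balance_dd": max_dd,
    }

# Hypothetical history: nine small wins, then one large loss.
history = [10, 10, 10, 10, 10, 10, 10, 10, 10, -80]
stats = summarize_trades(history)
print(stats)
```

In this made-up history the win rate is 90%, but RR is only 0.125 and the single loss is eight times the average win. That is the kind of profile a headline win rate hides.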

Quick takeaway:

A “beautiful backtest” plus “strong short-term forward” is exactly what misleads many beginners.

Don’t decide based on results alone—check the internals (loss profile, RR, DD, and whether floating losses are being hidden).

Test Other Pairs Too: Does It Survive With the Same Settings?

A very effective way to spot overfitting is to test the same settings on different currency pairs.

Overfit EAs are often tuned too tightly to one specific pair and one timeframe.

So applying the exact same parameters to another pair can reveal whether the logic is truly robust (able to survive changing conditions).

A Simple Rule of Thumb (Easy for Beginners)

- Doesn’t collapse on other pairs → the logic may be more robust

- Looks like a miracle on one pair, collapses elsewhere → strongly suspect overfitting

The point isn’t “can it make the same profit on every pair?”

It’s whether it shows blow-up behavior when the market characteristics change.

(Profit levels naturally differ across pairs.)

Note: Some EAs Are Designed to Make This Test Impossible

Some products intentionally restrict testing on other pairs—for example, they only run on a specific symbol or under narrow conditions.

Be cautious if:

- The EA claims it works only on “this broker / this pair / this timeframe” with extremely narrow conditions

- The EA blocks or limits validation (can’t run, can’t test, or behaves abnormally on other pairs)

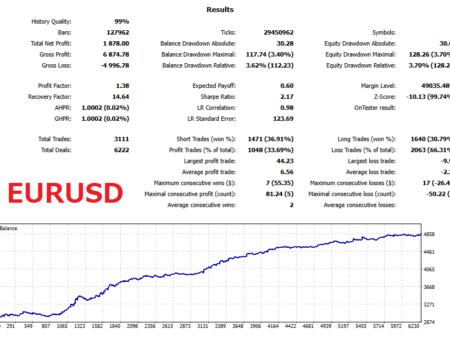

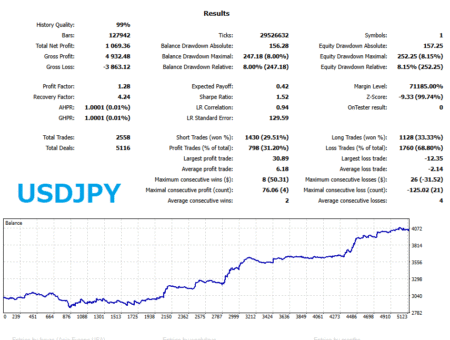

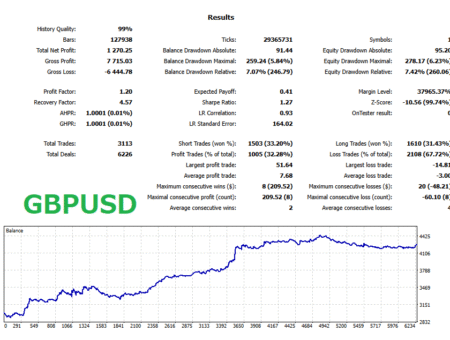

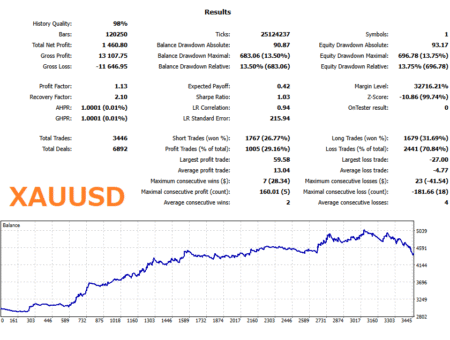

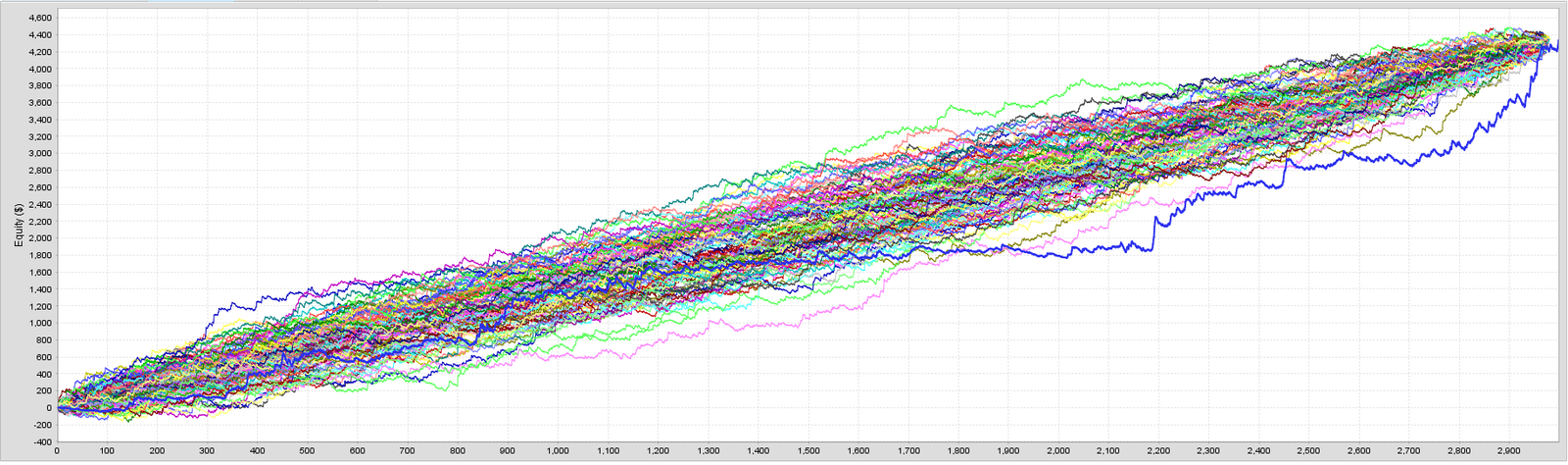

Case Study: Testing Multiple Pairs With the Same Settings

As a reference, Long Term Lobster EA was built for EURUSD,

but we ran backtests using the exact same logic and parameters on XAUUSD, USDJPY, and GBPUSD as well.

The equity curves below show broadly consistent behavior across pairs.

This suggests the strategy is less likely to be overfit and may be more generalizable.

Trade Count and Test Period: Why Small Samples Are Dangerous

When you suspect overfitting, two clues matter a lot: trade count (sample size) and how long the test period is.

The rule is simple: the more trades and the longer the period, the harder it is for “luck” and overfitting to dominate.

On the other hand, a small number of trades or a short period makes it easy to create settings that look great by chance.

Why Low Trade Counts Are Risky

With few trades, performance can look strong just because a winning streak happened to occur.

That mixes “luck” with optimization, and it becomes hard to judge whether the strategy is truly repeatable.

- Few trades but very high PF in a backtest → be careful

- Short forward history (e.g., a few weeks to a year) → still too early to judge
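A quick way to get a feel for this is to ask how often a strategy with no edge at all would show a high win rate purely by chance. The sketch below uses a simple binomial model and assumes each trade is an independent coin flip with a 50% chance of winning, which is a simplification. The function name `prob_at_least` is made up for this example.

```python
from math import comb

def prob_at_least(wins, trades, p=0.5):
    """Probability of at least `wins` winners in `trades` independent trades,
    if each trade wins with probability p."""
    return sum(
        comb(trades, k) * p**k * (1 - p) ** (trades - k)
        for k in range(wins, trades + 1)
    )

# Chance that a coin-flip strategy shows a 70%+ win rate:
small_sample = prob_at_least(14, 20)    # 14 or more wins out of 20 trades
large_sample = prob_at_least(350, 500)  # 350 or more wins out of 500 trades

print(f"20 trades:  {small_sample:.4f}")
print(f"500 trades: {large_sample:.2e}")
```

Under these assumptions, a no-edge strategy reaches 70% or better in 20 trades about 5.8% of the time, while doing the same over 500 trades is practically impossible. Win rate is not the same as profitability, but the same logic applies to any statistic taken from a small sample.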

Rule of Thumb: Look at “Time” + “Count” Together

Don’t rely on only one. Reliability improves when the test covers both:

- A long period: includes different market regimes (trend/range, volatility shifts)

- Many trades: reduces random bias and makes the stats more stable

Important: Trade Count Should Be Measured “Per Logic”

This is where many beginners get confused.

Even if an EA shows “1,000 trades,” these two cases are very different:

- A: One single logic traded 1,000 times

- B: Ten different logics traded 100 times each (total 1,000)

In general, B is easier to overfit.

The more separate rules you combine, the easier it becomes to find a mix that matches the past—even if each logic has only a small sample.

Note: You Usually Can’t See the EA’s Internal Structure

In many cases, only the developer knows how many distinct logics are inside an EA.

So you can’t conclude that “many trades = low overfitting risk.”

Watch out for:

- EAs that are overly feature-rich (too many conditions)

- EAs that bundle multiple strategies (e.g., different rules by time window, switching logic by regime)

Even with a high trade count, the trades might be spread across multiple internal logics.

Summary:

“More trades” and “longer period” are positives, but don’t over-trust them.

If possible, judge them together with long-term forward results, trade history, and realistic cost assumptions.

The “AI EA” Trap: Why Overfitting Is More Likely

More EAs now advertise “AI,” “machine learning,” or “deep learning.”

But here’s the key point: machine-learning EAs tend to be more prone to overfitting, so you should check them more carefully than typical rule-based EAs.

Why Machine-Learning EAs Overfit More Easily

- More freedom to tune: ML can learn complex patterns, but it also learns noise

- Inputs tend to explode: many indicators, timeframes, and pairs can be added, making it easier to fit the past

- Harder to explain: decisions can become a black box, which often leads to weaker validation

A well-built ML EA can be powerful—but a poorly built one can become an EA that simply “memorized the past.”

Ignore the “AI” Label—Focus on Transparency and Repeatability

“AI” doesn’t guarantee an edge.

If anything, AI EAs require more emphasis on transparent validation and repeatable results.

Don’t buy it because it says “AI.”

Buy it only if the developer clearly shows how it was tested and what was disclosed.

Related article: Is AI Trading Dangerous? Common Pitfalls in Machine-Learning EAs and LLM-Connected EAs (How to Spot Them)

Pre-Purchase Checklist: How to Spot an Overfit EA

Here’s a checklist to help you detect overfitting before buying an EA.

If many of these apply, the EA may look great in a backtest but still break down in real trading.

1) Is There Verified Forward Proof (Preferably a Live Account)?

If forward results don’t exist—or they often get hidden—removing that EA from your shortlist is usually the safest move.

- Are forward results publicly available on Myfxbook or MQL5 Signals?

- Is it a live account? (demo is reference only)

- Are the broker, account type (ECN/Raw, etc.), and commission model stated clearly—so you could reproduce conditions?

- Longer forward periods and more trades generally increase reliability

- Even if short-term forward looks great, check trade history for RR, loss profile, and grid/martingale signs

2) Is the Trade Count (Sample Size) Large Enough?

Smaller samples make it easier for luck and optimization “hits” to distort the stats.

- Rule of thumb: 500+ trades per single logic. Ideally 1,000+ (just a guideline)

- If PF is extremely high with only ~100–300 trades, be cautious

- Important: don’t look only at total trades—think in terms of trades per logic (too many bundled logics are easier to overfit)

3) Does Win Rate vs. RR (Risk-Reward) Look Natural?

A high win rate isn’t automatically bad.

But the higher the win rate, the more likely it is that the strategy is paying for those wins with rare but huge losses, and those can be fatal.

This type often looks great in “backtest + short forward,” then collapses over the long run.

- Win rate 80–95% with tiny RR (average win ÷ average loss) can be a danger sign

- Rough guideline: RR ≥ 1.2–1.5 (depends on strategy, but avoid extremely small RR)

- Max loss vs. average win is critical (often the most important check)

- Common danger pattern:

  - Small average wins, large average losses (low RR)

  - Max loss is several to dozens of times larger than the average win

  - After many wins, one loss gives back a big part of the gains

This profile is common in grid/martingale systems, but some products disguise it with different-looking rules.
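The link between win rate and RR can be written down directly. As a simplified sketch (the symbols are introduced here for illustration, and trading costs are ignored), let $p$ be the win rate, $W$ the average win, and $L$ the average loss, so that $RR = W / L$. The expected result per trade is:

$$
E = p \cdot W - (1 - p) \cdot L
$$

Setting $E > 0$ and dividing by $L$ gives the break-even win rate:

$$
p > \frac{1}{1 + RR}
$$

With $RR = 0.1$, the EA needs a win rate above $1 / 1.1 \approx 90.9\%$ just to break even, so a 90% win rate is still a losing system. With $RR = 1.5$, anything above 40% is enough. This is why a win rate only means something when you read it together with RR.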

4) Are Trading Cost Assumptions Realistic? (Commission / Spread / Slippage)

If you can run your own backtests, use realistic trading costs.

The shorter the timeframe, the more sensitive performance becomes to cost differences.

- Does the backtest include commission?

- Is spread modeled realistically (not a “perfect fixed spread”)?

- Is slippage assumed as zero or unrealistically favorable?

If PF looks too high, question the cost assumptions first (fixed tight spread, no commission, zero slippage).

5) Is the Test Period “Conveniently Chosen”?

Sometimes an EA uses internal date filters—for example, “tested from 2010” but trades appear only after 2018.

Also, EAs with frequent long “no-trade” stretches may depend on very specific conditions.

- Is the test period too short? (longer is better; start with multiple years)

- Does it win only in a specific regime (e.g., trend-only years)?

- Are there unnatural “no trading” periods? (check chart + history)
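If you have the trade dates from a backtest report, the “no trading” check can be automated. The sketch below assumes the dates are already parsed into `datetime.date` objects. The function name `find_trade_gaps`, the 90-day threshold, and the sample dates are all hypothetical choices for this example.

```python
from datetime import date

def find_trade_gaps(trade_dates, test_start, test_end, min_days=90):
    """Return (gap_start, gap_end, days) for every stretch of at least
    min_days without a trade, including before the first and after the last."""
    points = [test_start] + sorted(trade_dates) + [test_end]
    return [
        (a, b, (b - a).days)
        for a, b in zip(points, points[1:])
        if (b - a).days >= min_days
    ]

# Hypothetical report: "tested from 2010", but trades only start in 2018.
trades = [date(2018, 3, 1), date(2018, 3, 15), date(2018, 4, 2)]
gaps = find_trade_gaps(trades, date(2010, 1, 1), date(2018, 4, 30))
for start, end, days in gaps:
    print(f"No trades from {start} to {end} ({days} days)")
```

A long gap is not proof of overfitting by itself, since some strategies are meant to trade rarely. It is a prompt to ask why the EA stayed out of the market for that period.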

6) Does It Avoid Blowing Up on Other Pairs? (If Possible)

If you can backtest, apply the same settings to other pairs.

Don’t focus on “same profits”—focus on whether it avoids collapse.

- If it collapses instantly on another pair → suspect overfitting

- If other-pair testing is restricted (won’t run / can’t test) → red flag

If many items look suspicious, slow down before buying.

Ideally, evaluate an EA using forward proof (preferably live) + trade history + realistic costs + reasonable period—rather than judging by pretty backtest numbers alone.

5 Common Tests Developers Use to Reduce Overfitting

EA developers often use these tests to reduce overfitting. Here are five common ones.

But as a buyer, don’t assume “they ran these tests = the EA is safe.”

These tests are still an extension of backtesting, and because they rely on historical data, it’s possible to present results in a flattering way.

Every test has weaknesses. Treat them as tools to add evidence—not as a guarantee.

The most important step is still what we covered above: check forward results and trade behavior (loss profile, RR, DD), and if possible, run your own backtests with longer periods, realistic costs, and other pairs.

1) Out-of-Sample Testing (Split the Period)

You optimize on one period, then test the same parameters on a different period.

This is the basic way to ask, “Did it just fit the past?”

- Example: optimize on 2005–2020 → test on 2021–2025

- If it breaks during the test period, it likely fit the past too closely

Limitations (Why It’s Not a Magic Proof):

- If you try enough strategies, some will “work” out-of-sample by luck (it doesn’t guarantee live performance).

- A developer can choose a “convenient split” that makes the results look better.

2) Walk-Forward Testing (Repeat “Optimize → Test” in Blocks)

Assuming market conditions change, you repeatedly optimize on one block and test on the next block.

This is closer to how systems behave in real operations.

- More realistic than a one-shot optimization

- If results swing wildly by block, the EA may be fragile
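The block structure is easier to see in code. The sketch below only generates the index ranges for a rolling walk-forward split. The optimization and testing steps themselves are left out, and the name `walk_forward_windows` and the sizes used are assumptions for illustration.

```python
def walk_forward_windows(n_bars, train_size, test_size):
    """Yield (train_range, test_range) pairs of bar indexes.

    Each test block starts right after its training block, and the whole
    window then rolls forward by test_size bars.
    """
    start = 0
    while start + train_size + test_size <= n_bars:
        train = range(start, start + train_size)
        test = range(start + train_size, start + train_size + test_size)
        yield train, test
        start += test_size

# Tiny example: 10 bars, optimize on 4, test on the next 2.
windows = list(walk_forward_windows(n_bars=10, train_size=4, test_size=2))
for train, test in windows:
    print(f"optimize on bars {train.start}-{train.stop - 1}, "
          f"test on bars {test.start}-{test.stop - 1}")
```

The key property is that every test block lies strictly after the data used to optimize it, so each block acts as a small out-of-sample test.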

Limitations (Why It’s Not a Magic Proof):

- Just like out-of-sample, it can still “work by chance,” and block selection can be biased.

- It often assumes you will re-optimize regularly, which buyers may not be able to do consistently.

3) Parameter Sensitivity Testing (Does It Survive Small Changes?)

Stronger systems tend to survive small parameter changes.

But if only the “best” setting works and even a small change breaks it, that’s a classic overfitting sign.

- Move key parameters by ±10–20% and see whether PF/DD collapses

- If one single point is “the only good answer,” be skeptical
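As a rough sketch of this check, the code below shifts one parameter by ±10% and ±20% and re-runs a backtest each time. The `toy_backtest` function is a hypothetical stand-in that returns a profit factor. In practice you would replace it with whatever runs your real backtest. The parameter names are made up as well.

```python
def sensitivity_scan(backtest, params, key, steps=(-0.2, -0.1, 0.0, 0.1, 0.2)):
    """Re-run `backtest` with params[key] shifted by each relative step."""
    results = {}
    for step in steps:
        trial = dict(params)  # copy, so the original settings stay untouched
        # Note: integer-only parameters may need rounding in a real tester.
        trial[key] = params[key] * (1 + step)
        results[step] = backtest(trial)
    return results

def toy_backtest(p):
    """Hypothetical, deliberately fragile 'strategy': the profit factor is
    only good when ma_period is almost exactly 50."""
    return 2.5 if abs(p["ma_period"] - 50) < 1 else 0.9

scan = sensitivity_scan(toy_backtest, {"ma_period": 50, "stop_pips": 30}, "ma_period")
for step, pf in scan.items():
    print(f"ma_period {step:+.0%}: PF={pf}")

# Only one of the five neighbouring settings is profitable: a warning sign.
fragile = sum(pf > 1.0 for pf in scan.values()) == 1
```

A more robust system would show a broad plateau of acceptable results around the chosen value rather than a single spike.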

Limitations (Why It’s Not a Magic Proof):

- If key parameters are hidden or fixed by the developer, buyers can’t run meaningful sensitivity tests.

- Even if sensitivity looks good, don’t rely on it alone—use it with other checks.

4) Monte Carlo Analysis (Randomization): Does It Handle Order and Noise?

You shuffle trade order or add small execution noise (like slippage) to see whether the strategy breaks.

It’s useful for checking whether results depend too much on a “lucky” sequence.

- Some performance drop is normal

- If a small change leads to near-bankruptcy outcomes, the edge may be thin
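Here is a minimal sketch of the trade-shuffling variant. It assumes a plain list of closed-trade results, and the function names and sample history are hypothetical. It reorders the same trades many times and records the maximum balance drawdown of each ordering.

```python
import random

def max_drawdown(pnls):
    """Largest peak-to-valley drop of the closed-trade balance curve."""
    balance = peak = worst = 0.0
    for p in pnls:
        balance += p
        peak = max(peak, balance)
        worst = max(worst, peak - balance)
    return worst

def shuffled_drawdowns(pnls, runs=1000, seed=0):
    """Max drawdown for `runs` random reorderings of the same trades, sorted."""
    rng = random.Random(seed)
    results = []
    for _ in range(runs):
        order = pnls[:]       # copy, so the original history is not modified
        rng.shuffle(order)
        results.append(max_drawdown(order))
    return sorted(results)

# Hypothetical history: 18 small wins and 2 large losses.
history = [10] * 18 + [-40, -40]
dds = shuffled_drawdowns(history)
print(f"median max DD: {dds[len(dds) // 2]}, worst max DD: {dds[-1]}")
```

Every reordering contains exactly the same trades, so the total profit never changes and only the path does. The worst drawdown this sketch can ever report is the two losses back to back, and it never sees floating losses at all.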

Limitations (Why It’s Not a Magic Proof):

- Main limitation: In many cases, Monte Carlo analysis only reshuffles realized trade P/L. It does not reliably reflect equity drawdown that includes floating losses. In other words, balance-only views can miss the real risk.

- So even if a strategy hides “one-shot” blow-up risk (e.g., grid or martingale), Monte Carlo alone may not reveal it.

- Monte Carlo charts look professional, but they’re often just reordering trades and adding light noise to a backtest.

- If the backtest itself is overfit, Monte Carlo can still look good—while live trading can break down even harder.

- Don’t assume “the worst case is only as bad as the bottom line on the chart.” Real markets change in ways trade shuffling can’t recreate (spread spikes, bad fills, regime shifts, correlation changes, etc.).

5) Cost Stress Testing (Use Harsher Spread/Slippage)

Short-term EAs are especially sensitive to costs, so add worse conditions and check whether performance collapses.

- Widen the spread beyond expected live conditions (e.g., +20–50%)

- Add slippage (especially around news or low-liquidity hours)

- Check whether it still survives and whether DD spikes
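A very rough version of this test can be done on an exported trade list by subtracting an extra cost from every trade and recomputing PF. The sketch below assumes results are in pips per trade and treats the extra cost as one fixed number per trade, which is a simplification of how spread and slippage really behave. The function names and the sample scalper history are hypothetical.

```python
def profit_factor(pnls):
    """Gross profit divided by gross loss (inf if there are no losses)."""
    gains = sum(p for p in pnls if p > 0)
    losses = -sum(p for p in pnls if p < 0)
    return gains / losses if losses > 0 else float("inf")

def apply_extra_cost(pnls, extra_cost_per_trade):
    """Subtract a fixed extra cost (wider spread, slippage) from every trade."""
    return [p - extra_cost_per_trade for p in pnls]

# Hypothetical scalper: eight 2-pip wins and two 4-pip losses.
history = [2, 2, 2, -4, 2, 2, -4, 2, 2, 2]
for extra in (0.0, 0.5, 1.0):
    stressed = apply_extra_cost(history, extra)
    print(f"extra cost {extra} pips/trade: PF={profit_factor(stressed):.2f}")
```

In this made-up example, one extra pip of cost per trade takes PF from 2.0 to below 1.0. A strategy with larger average wins would be affected far less by the same cost increase.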

Limitations (Why It’s Not a Magic Proof):

- Real costs change by broker and time of day, so your stress settings may still differ from reality.

- Results depend heavily on assumptions, so tests that clearly state assumptions (commission, average spread, expected slippage) are more trustworthy.

Summary: The Most Important Ways to Avoid Overfit EAs

No matter how clean a backtest looks, it doesn’t prove an EA will keep winning. Overfitting can happen easily when you tune to historical data. Results with extremely high win rates or PF and unrealistically low drawdown should be treated with extra caution.

The first thing to check before buying is verified forward performance—ideally on a live account. If forward results aren’t public, if they frequently disappear, or if the broker/account type/cost assumptions are unclear, it’s often rational to remove that EA from your list. Still, even a strong forward record doesn’t automatically mean “safe.” Review the trade history and confirm the loss profile is not dangerous (no “many small wins, one big blow-up,” no grid/martingale behavior), the win rate vs. RR balance looks natural, and the maximum loss isn’t massively larger than the average win.

Next, look at trade count and test duration. Smaller samples and shorter periods make it easier for luck and optimization to dominate. Also, don’t be fooled by “total trades.” If multiple internal logics are bundled, each logic may have a small sample, making overfitting easier even when the overall count looks large.

If possible, run your own backtests and apply realistic trading costs (commission, variable spreads, slippage). Then test the same settings on other pairs and focus less on profit size and more on whether the strategy avoids collapse. Developer tests like out-of-sample, walk-forward, sensitivity, and Monte Carlo can add evidence, but none of them is a guarantee, and they can be presented in ways that look better than reality. More than fancy charts, value clear assumptions and transparent testing steps.

In the end, don’t chase “the best numbers.” Prioritize robustness and repeatability.

When a backtest looks too attractive, pause and verify forward proof, loss behavior, cost sensitivity, sample size, and how well it survives in different conditions. That approach dramatically reduces your odds of buying an overfit EA.

FAQ

- Q. What is overfitting?

- A. Overfitting is when an EA matches past market data too closely, then fails to reproduce the same performance in future markets. Be especially careful with EAs that look great only in backtests.

- Q. If the backtest looks great, is it safe to buy?

- A. Not necessarily. A strong backtest alone can’t rule out overfitting. Check verified forward results (preferably live) and review the loss profile in the trade history.

- Q. Why is forward testing so important?

- A. Forward testing checks performance in “unknown” market conditions. Overfit EAs often look clean in backtests but break down in forward/live trading—so forward proof should be your top priority.

- Q. Can I trust demo forward results?

- A. Use them as reference only. Demo fills and spreads can differ from live conditions, and this gap is especially important for scalping EAs. Whenever possible, prioritize live-account results.

- Q. How long should a forward test be?

- A. There’s no single “safe” number of months. But a few days or weeks is usually too short to judge. Try to review at least several months and check monthly swings, max drawdown, and the loss profile.

- Q. How many trades are enough to trust the stats?

- A. There’s no absolute safety line, but as a guideline, look for 500+ trades per single logic, ideally 1,000+. If PF is extremely high with a small number of trades, suspect luck or overfitting.

- Q. Is a high win-rate EA always good?

- A. Not by itself. A high win rate with a low RR (average win ÷ average loss) can lead to “many small wins, one big loss,” where one losing trade wipes out a lot of gains.

- Q. Why are grid and martingale strategies risky?

- A. They can look strong in the short term, but losses can grow quickly when price moves against the position for a long time. Even if the balance curve looks smooth, always check equity drawdown and maximum loss.

- Q. How important are trading costs (spread, commission, slippage)?

- A. Extremely important. Short-term EAs can change performance dramatically with small cost differences. Confirm whether the backtest includes commission, uses realistic spreads, and assumes realistic slippage.

- Q. Should I test the EA on other currency pairs?

- A. If possible, yes. Apply the same settings and focus less on profit and more on whether the strategy avoids collapse. If only one pair looks amazing, suspect overfitting.

- Q. If the developer ran out-of-sample or Monte Carlo tests, is it safe?

- A. Not automatically. These are still extensions of backtesting and can be presented in flattering ways. Evaluate them together with forward results and trade history.

- Q. Do AI (machine-learning) EAs overfit more easily?

- A. Often, yes. They have more freedom to fit noise, so validation transparency matters even more—clear train/test separation and public live forward results are especially important.

- Q. What’s the fastest “minimum check” for busy people?

- A. First confirm there is verified live forward proof. Then check max drawdown, RR, and the maximum loss (how it loses). If anything looks suspicious, skipping the purchase is usually the safer choice.